VR CO-CREATION WITH RL PT. 1

spring 23'

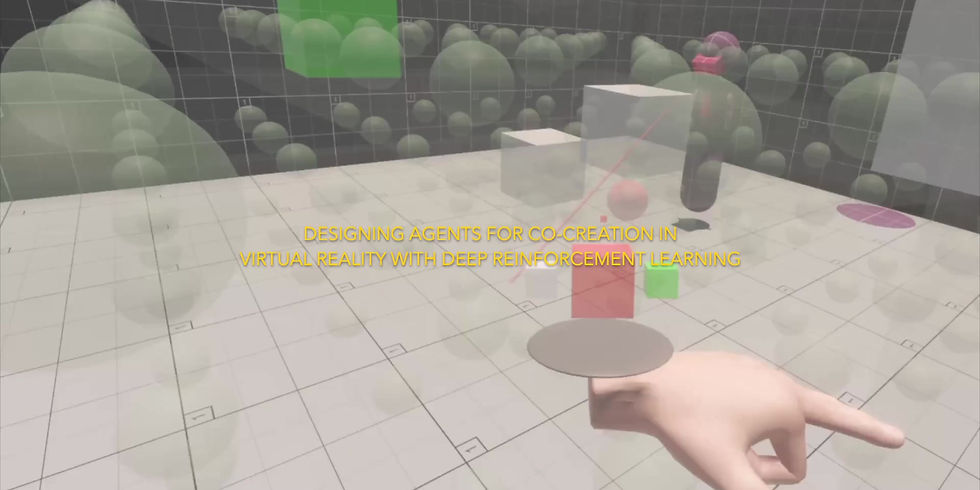

For my final MSc thesis, I challenge myself to confront the confluence of immersive VR technologies, deep learning, human-AI interaction, and 3D generative design. In order to do so, I conceptualized the formulation of a VR application that in its development will help reveal what future questions and further areas of research might emerge from connecting these technologies together. In my final application, given a target 3D model, an agent is trained with deep reinforcement learning (RL) and generative adversarial imitation learning (GAIL) to construct a voxelized version of the design while embodying a virtual avatar similar to a VR user. A VR user can also occupy this design space and contribute to the block-building design exercise of the target 3D model, implicitly co-creating with the agent. Too, the user's actions within the space can be recorded and used to augment the agent's trained behavior. This thesis not only reflects on the final outcome of this prototype but uses the development process to explore how future developers might consider virtual reality platforms and more open-ended scenarios involving humans and AI.

Due to my thesis being rather large, I split it up into two parts, where Part 1 looks at the core development of the VR environment and the RL agent, and Part 2 explores methodologies created for evaluating the process of RL training and its variants in this particular context.

Mapping the multiple disciplines and their overlaps to help define my own prototype

The inspiration for this thesis came as a result of my many interests explored during my graduate studies across deep learning, VR development, and design interaction. Furthermore, culturally we see the large impact AI has on the creative landscape through generative models. That being said, there are still ways to go in seeing progress with machine learning regarding 3D design as well its impact on AI avatars in VR. But surely, we can speculate as anything to do with AI these days is right around the corner. In this thesis, I wanted to ask many questions as to what immersing oneself in virtual reality might mean for future virtual platforms, when they can be so tied to the state-of-the-art regarding deep learning. Simulation in game engines and deep learning have a historical relationship suggesting that human-AI relationships in the future might largely be formed entirely virtually.

Extended footage co-creating with one of the trained agent variants

Within the co-creation space lies a Block Matrix that hosts implicit interactions between the user and agent within the VR environment. But perhaps more so, it is an essential framework for training the agent on how and what to design. The Block Matrix itself is quite simple, being a cubic grid of ghosted blocks that can be converted to different colors between white, red, green, and blue. To activate these blocks, the VR user utilizes the center of their gaze as a cursor and can cycle between block types using the VR hand triggers and also deciding to place the current toggled color over the gazed block. In an attempt to bring the user and agent closer, the agent is designed with similar functionalities.

Layout of the VR co-creation environment

There are two different problems that are required for the AI agent to solve: firstly, to control the VR avatar within the virtual space, and secondly, to understand the target 3D model and make decisions for placing blocks within the space. At first, I approached the problem as one machine learning problem, but through some iteration, realized the best way to train and model these two distinct problems is to design two separate training environments, and for inference, utilize two neural networks with their own observations and actions for controlling the agent.

I call these two networks or agents, the Guiding Agent and the Creator Agent. The Guiding Agent is trained to learn the voxel arrangement of the target 3D model. The Guiding Agent needs to be retrained per different input 3D models. The Creator Agent learns how to move within the space, similar to the human VR user. It serves more as the VR interface for the AI's design motivations. During inference, the Creator Agent selects a target block with its gaze, and once doing so, requests a new target from the Guiding Agent until completion.

Relationship between the two trained networks during inference

The Guiding Agent observes a 7 x 6 x 7 Block Matrix as a 251-length binary vector, expressing the spatial condition of the current voxel form. Its actions are per step to decide a block type, ranging from do nothing, blank, to a block color, and a 3D voxel coordinate. It is rewarded positively during training for each newly correct block placed, and negatively rewarded for any incorrect or redundant block placement.

Converiting the target 3D model into a voxel form withing the Block Matrix

The Creator Agent takes on a more explicit role, controlling its avatar through the movement and rotation of its body, and rotation of its head. The Creator Agent observes its own transform parameters like rotation and world position, as well as the position of the target block given by the Guiding Agent, and the current dot product between the agent's head to the target. This dot product is used to reward the agent to suggest that it gaze towards the target block, like a human VR user would.

Creator Agent training environment

Guiding Agent training environment

A key aspect of this thesis is the exploration of creating different agent variants via augmentation with demonstration. This was the original inspiration for making the RL agent control a VR-similar avatar such that a human can operate naturally within their familiar VR controls and record their actions to augment RL training. That being said, this pertains more directly to the Creator Agent. Because the Guiding Agent is more concerned with design and reconstruction, demonstrations for the Guiding Agent is done via a point-and-click interface, similar to working within Unity.

Demonstrations allow for the exploration of weighting human behaviors into the agent's final behavior and suggesting design styles that are not made explicit within the environment or reward function. Part of this goal is to 'design' RL agents to make them mimic a more human way of doing things. This is important because within virtual environments or games, the agent's often can outperform human tasks at an unreliable level, and especially in VR where agent details are more impactful, can move in unwanted ways.

Creating demonstrations for the Guiding Agent

Creating demonstrations for the Guiding Agent

For the Guiding Agent, five variants were developed based on a vanilla agent trained without GAIL:

-

Base 1, a vanilla benchmark agent

-

Base 2, has the same parameters as Base 1 and is trained starting with Base 1's weights

-

Red 1, a demonstrated style using only Red blocks and constructing from bottom to top with light demonstration weight

-

Red 2, same demonstration as Red 1 but just weight more strongly

-

Multi, a style of using different colors for the sides of the target 3D model with the demonstration weighted strongly

Rewards over training steps for the Guiding Agent Variants

For the Creator Agent, four variants were developed based on a vanilla agent trained without GAIL:

-

Base, a vanilla benchmark agent

-

Demo, an agent trained with strongly weighted human demonstration

-

Base-Demo 1, a lighter weighting of the demonstration, trained using the Base agent's weights

-

Base-Demo 2, a strong weighting of the demonstration, trained using the Base agent's weights

Rewards over training steps for the Creator Agent trained with demonstration versus without

Building this prototype allowed me, my peers, and the research committee to help better understand many facets of future relationships between humans and AI within virtual interactions. What I have done is set up a technical and theoretical framework that can serve as a template for exploring these intricate and undefined questions for the future.

My own personal reflections as a co-creator in the VR environment with the agent are that of great fascination - that being said, I spent more time observing the agent rather than co-creating with it. The more open-ended objective of co-creation still needs further investigation, how we train an agent with subjective capabilities while also being comfortable to be used alongside our familiar human interfaces. I hope my contribution can help bridge the gap between ML researchers, game developers, and 3D designers such that they can deliberate together upon these problems and seek novel solutions.

The architecture of the co-creation environment

For more detailed qualitative results and reflection, visit the page for Part 2 of my thesis recap. In Part 2, I suggest novel methods for exploring agent variants in RL and connect these evaluative methods to the question of more open-ended design agents in machine learning.

It should also be noted that this is a highly summarized version of my thesis methods without going too far into the implementation and quantitative variation between agents. My thesis is under review for publication on the CMU thesis repository, which I will link to upon later publication.